1. Introduction

Business services and capabilities are socio-technical in nature: they include people, not just information technology. Cyber-attacks are also socio-technical; attackers look for the weakest link in an organisation’s defences and do not care whether they use a technical exploit or a human one to achieve their goals.

People are often the weakest link in an organisation’s cyber security defences, and yet they have access to the front door. As the cyber threat has grown over recent decades, the response has often been to invest in new technical tools and solutions which claim to deliver advanced protection capabilities. This gives the impression of stronger technical security whilst actually leaving the front door wide open. Technical solutions need to work in conjunction with the people who use the system and operate the capability, forming a socio-technical system for cyber defence. They also need to be supported by business resilience measures for when, not if, an attack occurs.

2. Understanding the Risk

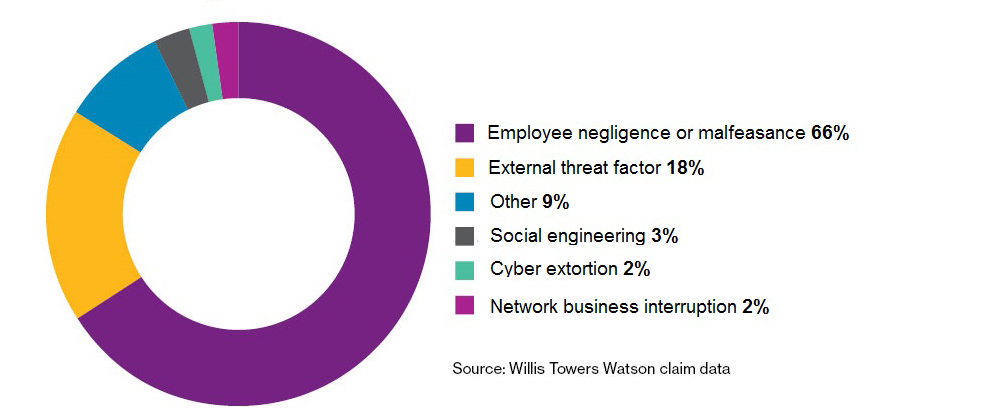

Whilst the precise figure varies, there is broad agreement that human actions, whether malicious or accidental, are at least partly the cause of a significant percentage of cyber incidents. Willis Towers Watson research (Figure 1) suggests that the figure is 66%.

Figure 1. Willis Towers Watson research suggests 66% of breaches are due to employee negligence or malfeasance

Such actions include, for example, inadvertently visiting a compromised website, clicking on a link in a phishing email, or failing to follow a documented operational process and so causing damage to systems.

Humans make mistakes, and therefore human action as a cause of cyber incidents will never be totally eradicated; however, given the significant proportion of breaches which involve human actions, it is important to understand why these actions occur and put in place effective controls to manage the risk. Simply requiring employees to complete more online security training is unlikely to be effective.

Before investing in activities to change behaviours or reduce risk, it is important to understand where human actions potentially lead to cyber risk and what level of risk that presents. This means that investment can be targeted at appropriate areas, and the return on investment can be tracked, providing evidence to support further activities or risk management decisions.

Organisations need to understand where humans interact with systems or capabilities in ways that could lead to cyber effects. This requires developing socio-technical models of how a capability is delivered in terms of people, process and technology. Each human interaction can then be assessed in terms of the level of risk it presents, and action can be targeted appropriately to treat the risk or to support other risk management decisions.
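
As an illustration only, the assessment described above can be sketched as a small risk register in which each human interaction is scored and ranked. The `HumanInteraction` type, the 1 to 5 scales, the likelihood multiplied by impact score and the example entries are all assumptions made for this sketch; they are not taken from the research cited here, and an organisation would substitute its own risk methodology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HumanInteraction:
    """One point where a person interacts with a system or process (hypothetical model)."""
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (frequent)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)

    @property
    def risk_score(self) -> int:
        # Assumed scoring rule: likelihood multiplied by impact.
        return self.likelihood * self.impact


def rank_by_risk(interactions):
    """Return the interactions ordered from highest to lowest risk score."""
    return sorted(interactions, key=lambda i: i.risk_score, reverse=True)


# Illustrative entries only; the scores are invented for the example.
interactions = [
    HumanInteraction("Using a phone in a restricted area", likelihood=3, impact=2),
    HumanInteraction("Opening links in external email", likelihood=4, impact=4),
    HumanInteraction("Applying patches to a production server", likelihood=2, impact=5),
]

ranked = rank_by_risk(interactions)
for item in ranked:
    print(f"{item.risk_score:>2}  {item.name}")
```

Ranking the interactions in this way gives a simple basis for deciding where investment should be targeted first, and re-scoring after an intervention gives one way of tracking its effect.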

3. Understanding what drives human actions

Where the risk assessment identifies risks associated with human interactions in a socio-technical system, it is important to target mitigating actions appropriately so that the desired reduction in risk is achieved.

The knee-jerk reaction when confronted with a need to improve human aspects of cyber security is to increase the amount of training provided to staff. This is often in the form of online courses or additional policy documentation which staff are asked to read.

This approach ignores the drivers of human behaviour and is unlikely to be effective.

3.1 Capability, Motivation and Opportunity Driving Human Behaviours

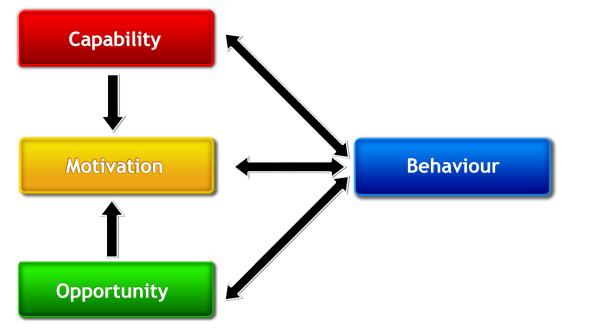

The COM-B system recognises human behaviour as an integral part of any interacting system or process, and provides a framework for understanding behaviour which allows the root cause to be identified and, subsequently, appropriate interventions to be implemented to drive behavioural change. In essence, the observed behaviour is broken down into three essential conditions (Figure 2):

a. Capability – this is the capacity of the individual to engage in an activity, including having the required knowledge, skills and training.

For example, the analysis may identify that the IT systems involved in the process are not suitable and therefore people are forced into unfamiliar or risky behaviours; or that individuals are being asked to do roles for which they do not have the appropriate training or qualifications.

b. Motivation – this is the desire of the individual in terms of what they want to achieve, and includes habitual processes as well as analytical decisions.

For example, there may be competing pressures on people in terms of delivery. Whilst they may well want to do the right thing from a security perspective, if the pressure from senior management is focused on achieving an operational target, then this will flow down and drive risky behaviours to achieve the outcome at the expense of security processes.

c. Opportunity – these are factors external to the individual which make behaviour possible or lead to a behaviour.

For example, people may understand the need to behave in a certain way, such as not using mobile phones in certain areas. If appropriate prompts are not in place to drive that behaviour, such as signage showing where phones may and may not be used, then the positive behaviour is unlikely to occur.

Figure 2. The COM-B model for analysing the root cause of behaviours

Each of the examples above for Capability, Motivation and Opportunity could lead to the same risky behaviour, but each would require very different actions to create a positive change and mitigate the risk.

Identifying the root cause of behaviour allows targeted interventions to be implemented. System and risk owners can therefore have assurance that effort is directed at the right areas and that they will achieve return on investment.
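
A minimal sketch of this idea is shown below: the same observed behaviour is paired with three different root causes, and each root cause leads to a different intervention. The `Condition` enum, the `INTERVENTIONS` table, the `suggest_intervention` helper and the example behaviour are hypothetical names and values chosen for illustration, loosely based on the examples above. They are not part of the COM-B model itself, and real interventions would come out of the root cause analysis rather than a fixed lookup.

```python
from enum import Enum


class Condition(Enum):
    """The three COM-B conditions described in the text."""
    CAPABILITY = "capability"
    MOTIVATION = "motivation"
    OPPORTUNITY = "opportunity"


# Hypothetical interventions, loosely based on the examples in the text.
INTERVENTIONS = {
    Condition.CAPABILITY: "Provide role-specific training or more suitable IT systems",
    Condition.MOTIVATION: "Align management targets with security processes",
    Condition.OPPORTUNITY: "Add environmental prompts such as signage",
}


def suggest_intervention(root_cause: Condition) -> str:
    """Look up the illustrative intervention for a diagnosed root cause."""
    return INTERVENTIONS[root_cause]


# One observed behaviour (invented for the example) with three possible diagnoses.
behaviour = "Staff bypass the approved file-transfer process"
plan = [(cause, suggest_intervention(cause)) for cause in Condition]

print(behaviour)
for cause, action in plan:
    print(f"  if root cause is {cause.value}: {action}")
```

The point of the sketch is the shape of the decision rather than the specific entries: the intervention depends on the diagnosed condition, not on the behaviour alone.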

4. Targeted action for positive behavioural change

The approach above allows the root cause of a behavioural risk to be identified and addressed with targeted mitigating activities. Rather than simply implementing more training, it allows activities to be developed which will target the underlying cause and therefore increase the chances of positive behavioural change.

Traditional approaches that focus on increasing the volume of training are likely to be ineffective and may in some cases even prove counterproductive. They can leave employees disillusioned, seeing security as a blocker to be overcome, and so reduce their motivation to follow correct security procedures.

5. Conclusions

Cyber incidents are socio-technical in nature, and organisations therefore need their cyber defences to mirror this. Adversaries will exploit the weakest link in the chain. Investing in technical solutions whilst leaving exploitable human behaviours unaddressed will therefore not achieve a reduction in risk proportionate to the investment.

It is crucial to adopt a holistic approach to risk management, cyber defence and resilience, one which involves people, process and technology together.

Leonardo’s approach involves developing an understanding of how an organisation’s critical capabilities are delivered, and in particular, where humans interact with systems and processes in a way which could present risk to the organisation. Where risks are identified, it is important to understand the root cause of the behaviour so that mitigating activities can be highly targeted and are therefore likely to be effective.

Our approach uses the COM-B framework. We suggest that this approach will drive positive behavioural change in an organisation, providing return on investment in terms of risk mitigation and reducing organisational exposure to cyber incidents. When coupled with explicit business continuity planning, it will also increase organisations’ resilience.

For further information about how Leonardo can help you address the human aspects of cyber security, please email our UK Cyber Security team.